Value Stream Mapping the DevOps Void

APR, 2018

by Niall Crawford

Niall is the Co-Founder and CIO of Enov8. He has 25 years of experience working across the IT industry, spanning Software Engineering, Architecture, IT & Test Environment Management, and Executive Leadership. Niall has worked with, and advised, many global organisations covering verticals like Banking, Defence, Telecom and Information Technology Services.

Ever wonder why your releases take so long?

After all, your company just invested a “zillion” dollars in a whole bunch of great ‘agile’ tools and a cloud framework. Tools that allow you to automatically provision your infrastructure, applications and data, and ensure that all your security obligations are met.

Hey! With this new “DevOps toolchain”, we should be moving our releases, from request to delivery, in a matter of minutes. You know … full push-button automation! … environments on demand! … And all that stuff!

Yeah! Right! But no! That’s not how it typically plays out.

In fact, a more realistic example might follow a storyline like this:

- Project manager raises a request for a Test Environment.

- Request sits in the IT service management (ITSM) queue for a few days.

- Gets approved & assigned by Test Environment Manager & distributed to engineering teams.

- Sits in the team ITSM queues for another few days.

- Apps team build the package in 5 minutes, but can’t deploy as infra not ready.

- Infra team provisions.

- Test team can’t start testing as data not ready.

- Data team ensures data is secure & compliant.

- Data team provisions.

- …

- Sorry, testing now too busy with another test cycle.

- Test teams spot a defect with the build.

- Higher priority project comes along and acquires environment.

- Go back to go.

I think one gets the point: the issue with DevOps efficiency is rarely down to a single atomic task.

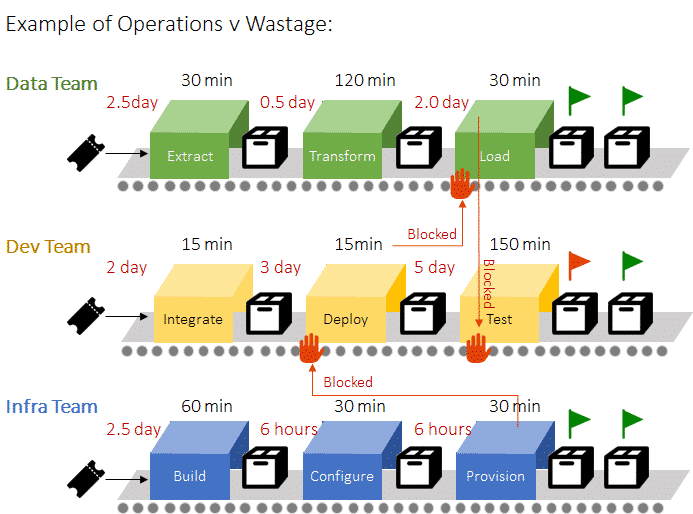

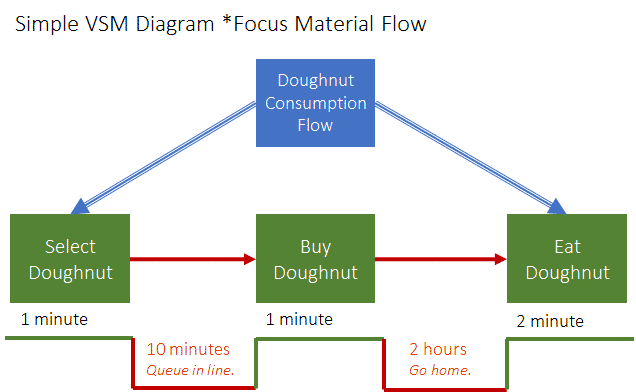

In fact, as the diagram illustrates, if you were to take a step back, you would probably realise that the inefficiency is not in the operations themselves (like a build task) but in the “void” (the wastage) in between.

In the above multi-process diagram, we can see the Data team takes:

- 180 minutes of operations (real value),

- 5 days of waiting (wastage),

- Or 2.5% Efficiency (Value Operation / [Value Operation + Wastage]).
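The efficiency figure above is simple arithmetic, and a short sketch makes the formula concrete. The function name `flow_efficiency` is illustrative rather than taken from any tool, and the conversion assumes that “5 days” means calendar days of 24 hours; counting working days instead would give a higher percentage.

```python
MINUTES_PER_DAY = 24 * 60  # assumption: "5 days" means 24-hour calendar days


def flow_efficiency(value_minutes, waste_minutes):
    """Return Value Operation / (Value Operation + Wastage) as a percentage."""
    total = value_minutes + waste_minutes
    if total <= 0:
        raise ValueError("total elapsed time must be positive")
    return 100.0 * value_minutes / total


# Data team figures quoted in the article
data_team_value = 180                   # minutes of operations (real value)
data_team_waste = 5 * MINUTES_PER_DAY   # 5 days of waiting (wastage)

efficiency = flow_efficiency(data_team_value, data_team_waste)
print(f"Data team efficiency: {efficiency:.1f}%")
```

Under the calendar-day assumption this comes out at about 2.4%, in line with the roughly 2.5% quoted.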

Not exactly something you want to write home about. However, it is not an atypical inefficiency, and it is a serious opportunity for improvement.

Imagine if each team could move from 2.5% to just 25%. The benefits would be enormous, and over the lifetime of a project we could be saving weeks, maybe months, of time, which translates to earlier “time to market” and significant IT project cost savings.
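To get a feel for what a move to 25% would mean, the efficiency formula can be rearranged to give the waiting time a team can afford: wastage = value × (1 / efficiency − 1). The sketch below applies this to the Data team's 180 minutes of operations. The function name is hypothetical, and days are again assumed to be 24-hour calendar days.

```python
MINUTES_PER_DAY = 24 * 60  # assumption: calendar days, not working days


def max_wait_for_target(value_minutes, target_efficiency):
    """Largest waiting time (minutes) that still meets the target efficiency.

    target_efficiency is a fraction, e.g. 0.25 for 25%.
    """
    if not 0 < target_efficiency <= 1:
        raise ValueError("target_efficiency must be in (0, 1]")
    return value_minutes * (1 / target_efficiency - 1)


value_minutes = 180                      # Data team operations, from the article
current_wait = 5 * MINUTES_PER_DAY       # 5 days of waiting, from the article
target_wait = max_wait_for_target(value_minutes, 0.25)
saved_minutes = current_wait - target_wait

print(f"Allowed waiting at 25%: {target_wait / 60:.0f} hours")
print(f"Waiting removed per request: {saved_minutes / 60:.0f} hours")
```

In other words, hitting 25% means the 180 minutes of work can sit idle for no more than about 9 hours in total, rather than 5 days.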

Enter Value Stream Mapping

Originally employed in the car manufacturing space, Value Stream Mapping (VSM) is a lean method that helps you better define a sequence of activities, identify wastage & ultimately improve your end-to-end processes. A set of methods that can be applied to any type of operation, including of course Test Environments & DevOps.

Some Definitions:

What is a Value Stream?

A value stream is a sequence of activities used to create a product or service that customers value. Its purpose is to optimize the flow of value and eliminate waste, reducing lead time. Value stream mapping is a tool used in Lean manufacturing to analyze and optimize processes. This technique can be applied to any industry, including software development, to identify opportunities to improve efficiency, reduce costs and deliver more value to customers.

What is Value Stream Mapping?

Wikipedia Definition: Value-stream mapping is a lean-management method for analyzing the current state and designing a future state for the series of events that take a product or service from its beginning through to the customer.

How do I go about implementing a simple Value Stream Mapping for DevOps?

1. Select the Product e.g. CRM application

2. Select the Delivery Process of Interest e.g. Build a test environment

3. Gather the SMEs, as VSM is a team event.

4. Visually Map Current State (material flow / operational steps)

5. Identify Non-Value between steps

6. Add a timeline for both Operations (green line above) + Non-Value (red line above)

7. Review Value Stream

8. Design Future State (Optimize)

9. Return to (3).

Tip: When getting started, steps 4 to 8 may initially be completed on whiteboards and simply use guesswork (in place of real data). However, for ongoing improvements, consider using tools that allow you to model your DevOps processes, track the operations and report on stream actuals. As an example, you could use the “Visual Runsheets Manager” functionality inside the Enov8 platform.
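Once the whiteboard version exists, the current-state map and its timeline can be captured as plain data so the numbers are easy to recalculate after each review. The following is a minimal sketch, not how any particular tool models it: the `Step` class and `summarize` function are hypothetical names, the Apps build time and the Data team figures come from the storyline above, and every other number is guesswork used purely for illustration.

```python
from dataclasses import dataclass

DAY = 24 * 60  # minutes; assumption: calendar days


@dataclass
class Step:
    team: str
    value_minutes: float  # time spent on the operation itself
    wait_minutes: float   # time the request sat idle before the operation


def summarize(steps):
    """Total the timeline and point at the step with the largest wait."""
    if not steps:
        raise ValueError("a value stream needs at least one step")
    value = sum(s.value_minutes for s in steps)
    waste = sum(s.wait_minutes for s in steps)
    bottleneck = max(steps, key=lambda s: s.wait_minutes)
    return {
        "lead_time_minutes": value + waste,
        "efficiency_pct": 100.0 * value / (value + waste),
        "largest_wait": bottleneck.team,
    }


current_state = [
    Step("Apps", 5, 2 * DAY),     # 5-minute build from the article; wait is a guess
    Step("Infra", 120, 3 * DAY),  # both numbers are guesses
    Step("Data", 180, 5 * DAY),   # figures quoted in the article
]

summary = summarize(current_state)
print(summary)
```

Sorting by waiting time rather than by operation time keeps attention on the “void” between steps, which is where the article argues most of the improvement lies.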

Benefits of DevOps Value Stream Mapping

- Baseline existing Operations

- Standardise Operations

- Identify Wastage

- Highlight Operational Bottlenecks (e.g. non-automated steps)

- Lift Efficiency (continuous improvement)

Learn More about VSM

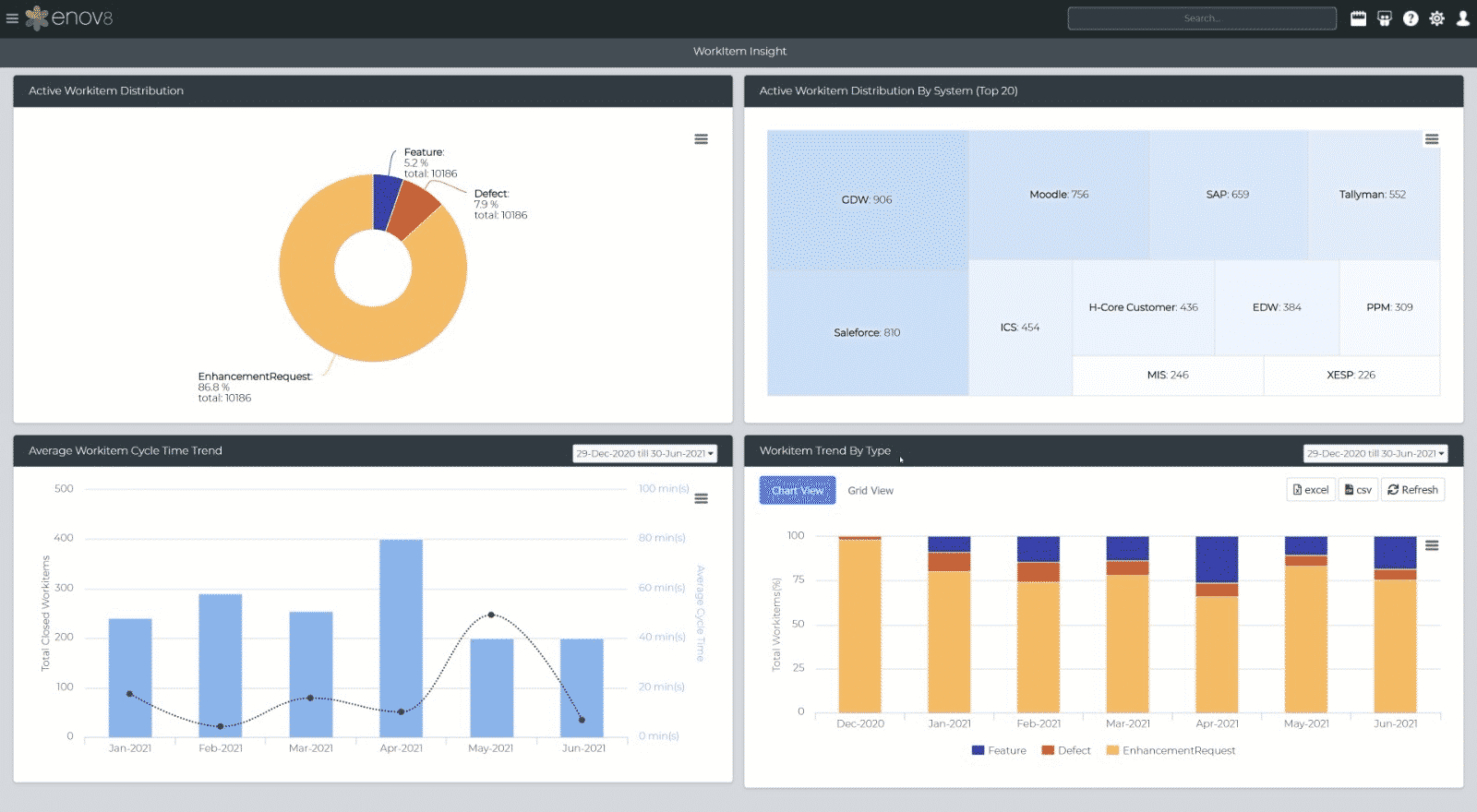

If you want to know more about how to leverage VSM in your IT delivery process then feel free to contact the Enov8 team. Enov8 is a complete solution that helps you streamline delivery by automating your Release Management, IT Environment Management and DataOps / Data Compliance activities. It also offers real-time insights, through customizable ‘information walls’, that help you measure performance and be “agile at scale”.

Enov8 Release Manager, Example Information Wall: Screenshot
