There is a pattern that repeats itself whenever a new technology becomes strategically important to the enterprise. First, adoption outpaces understanding. Then, when something goes wrong — a breach, a failure, a scandal — the industry scrambles to retrofit governance onto systems that were never designed with it in mind. We saw this with cloud. We saw it with mobile. And we are watching it happen, in real time, with AI.

The difference this time is the nature of the risk. Cloud breaches exposed data that was already there. AI failures can corrupt the decisions that data is supposed to inform. That is a materially different problem — and it demands a materially different conversation.

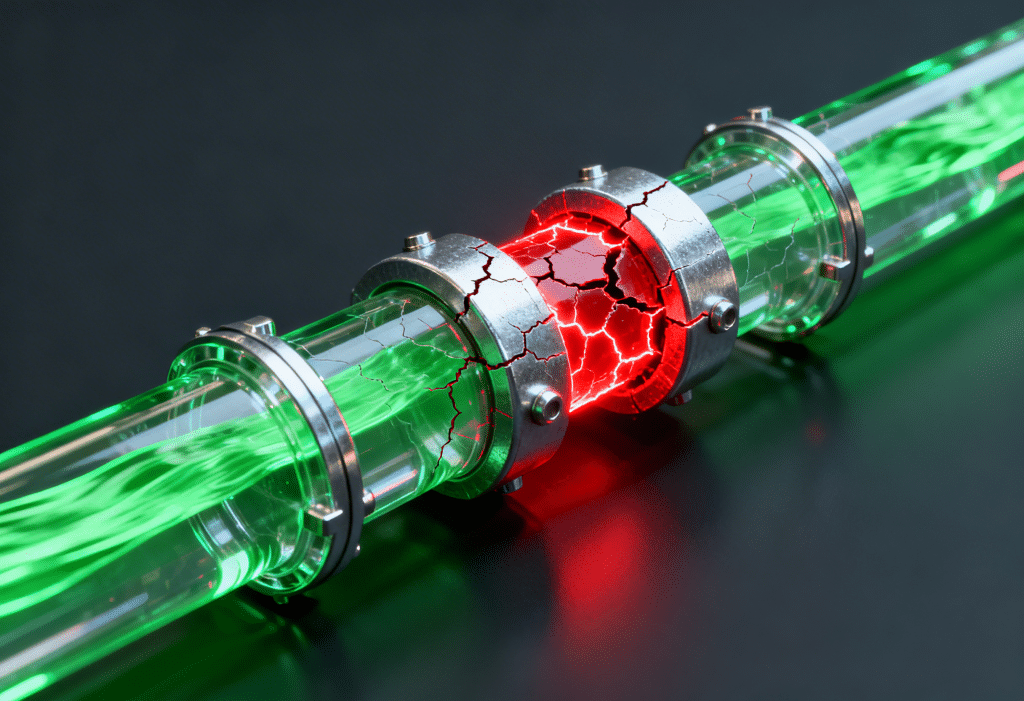

This post is about two risks that every enterprise AI team needs to understand and distinguish: poor data preparation (specifically, the failure to secure PII) and data poisoning. They are related and often conflated, yet they have very different implications for how organisations should respond.

The Risk You Already Know

Start with poor data preparation, because most enterprises are at least nominally familiar with it.

The concept is straightforward. When organisations build AI systems, particularly those that rely on large training datasets or test environments populated with production data, they routinely expose sensitive information. Customer records. Financial transactions. Health data. Personally identifiable information that is protected under laws such as GDPR and HIPAA, by prudential standards from regulators such as APRA, and by a growing body of global regulation.

As we have argued previously, PII risk in AI is fundamentally an architecture problem. The failure is not usually a single bad decision but a structural pattern: data flows designed for production systems being repurposed, without modification, to feed AI pipelines and test environments. The data was never designed to be in those places. It ends up there because the path of least resistance leads there.

The failure mode is not mysterious. Data engineers, under pressure to ship models and hit project timelines, use real data because it is available, it is representative, and it works. Synthesising or masking data takes time. And so the shortcut becomes the standard, until the standard becomes the liability.

OWASP’s 2025 Top 10 for LLM Applications formally catalogues this as a recognised risk category. Microsoft’s enterprise AI security guidance identifies it as a primary pipeline concern alongside the more exotic threats. And regulators are paying attention. The Australian Prudential Regulation Authority has made clear that data governance obligations extend to AI systems. The ICO in the UK is actively scrutinising AI training data practices.

The key characteristics of this risk are that it is largely accidental, it is detectable, and the remediation path is known. Mask the data. Tokenise the sensitive fields. Implement access controls. Run a synthetic data pipeline for non-production environments. None of these are unsolved problems. They are engineering and process problems that organisations choose — often implicitly — not to solve.
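To make "mask the data, tokenise the sensitive fields" concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the field names (`customer_id`, `email`, `tax_file_number`), the hard-coded key, and the masking rule are hypothetical, and a real pipeline would pull the key from a secrets manager and follow the organisation's own data classification.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; a real key belongs in a secrets manager.
TOKEN_KEY = b"example-key-not-for-production"


def tokenise(value, key=TOKEN_KEY):
    """Deterministic keyed token: the same input always yields the same token,
    so joins across tables still work without exposing the raw value."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not domain:
        return "*" * len(email)
    return local[:1] + "*" * max(len(local) - 1, 0) + "@" + domain


def prepare_record(record):
    """Return a copy of the record that is safer for non-production use."""
    safe = dict(record)
    safe["customer_id"] = tokenise(record["customer_id"])
    safe["email"] = mask_email(record["email"])
    safe.pop("tax_file_number", None)  # drop fields the model does not need
    return safe


record = {
    "customer_id": "C-1001",
    "email": "jane.doe@example.com",
    "tax_file_number": "123456782",
    "balance": 2500.0,
}
safe = prepare_record(record)
print(safe)
```

The keyed hash is a deliberate choice in this sketch: a plain unsalted hash of a low-entropy identifier can often be reversed by brute force, whereas a keyed token cannot be recomputed without the key.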

That choice is becoming expensive. But at least it is a choice organisations are aware of making.

The Risk You Don’t Know You Have

Data poisoning is a different category of problem entirely.

Where poor data preparation is an accidental failure of governance, data poisoning is a deliberate attack on the integrity of your AI system’s foundation. As the DataOps Zone explored in The Invisible Curriculum, models do not just learn what you teach them — they learn from everything in the training environment, including content that was never meant to be instructional. That framing is useful: a poisoned model is one that has been silently taught the wrong lesson, at scale, without anyone in the organisation knowing the curriculum was compromised.

The attack surface is the training data itself — or the fine-tuning data, or the retrieval-augmented generation pipeline, or any other data source the model learns from. The method is manipulation: injecting false, misleading, or maliciously crafted samples that cause the model to learn the wrong things.

The outputs of a successful poisoning attack are not obvious. The model does not crash. It does not throw errors. In most operational scenarios, it performs normally. The corruption only surfaces under specific conditions — often precisely the conditions the attacker designed for.

OWASP classifies data poisoning as a formal integrity attack category, noting that risks are particularly high with external data sources, open-source model repositories, and shared platforms. Research published in 2025 quantifies the impact more sharply: even minimal adversarial disturbances — as low as 0.001% of training data — can degrade model accuracy by up to 30%. In the financial sector, corrupting just 1% of training data in fraud detection or trading algorithms can drive significant economic losses and a cascade of false positives that erodes trust in the entire system.

These are not theoretical numbers. In January 2025, researchers documented a backdoor attack embedded in GitHub code repositories: a model fine-tuned on those repositories learned to respond with attacker-planted instructions whenever it encountered a specific trigger phrase, months after training and without internet access. Grok 4 had its guardrails effectively stripped because its training data had been saturated with jailbreak prompts posted on X. Research on Microsoft 365 Copilot, dubbed ConfusedPilot, demonstrated that malicious data injected into AI-referenced documents produced misleading outputs, and continued to do so even after the malicious documents were deleted.

The technology industry has a phrase for software that activates harmful behaviour only under specific conditions. It is called a backdoor. Data poisoning is a mechanism for embedding backdoors in AI models — and it is, by design, invisible to standard testing and review.

Why The Distinction Matters

It is tempting to treat these two risks as variations of the same problem — both involve data, both involve AI, both involve potential harm. That conflation is itself a risk.

Consider the organisational response each demands.

Poor data preparation is a compliance and engineering problem. The governance frameworks exist. The tooling exists — data masking, tokenisation, synthetic data generation, test data management platforms. The question is whether organisations are resourced and motivated to implement them. Regulatory pressure is the primary forcing function, and it is increasing. For most enterprises, the conversation is about prioritisation and budget, not fundamental capability.

Data poisoning is a security and adversarial AI problem. The attack surface is different, the attacker is different, and the detection methodology is completely different. Standard data validation does not catch poisoned samples designed to look clean. Accuracy metrics do not flag backdoored models that perform normally on standard test cases. The defence requires adversarial training techniques, data provenance controls, continuous behavioural monitoring, and red-teaming methodologies borrowed from offensive security.
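One of the controls named above, data provenance, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the sample IDs, the in-memory dictionaries, and the function names are hypothetical, and the check only detects changes made after a dataset was reviewed and approved. It does nothing about samples that were already poisoned when the manifest was built.

```python
import hashlib


def fingerprint(sample):
    """SHA-256 fingerprint of one training sample."""
    return hashlib.sha256(sample.encode("utf-8")).hexdigest()


def build_manifest(samples):
    """Record a fingerprint per sample ID at the point the dataset is approved."""
    return {sample_id: fingerprint(text) for sample_id, text in samples.items()}


def verify_against_manifest(samples, manifest):
    """Compare the dataset about to be trained on with the approved manifest."""
    report = {"modified": [], "unexpected": [], "missing": []}
    for sample_id, text in samples.items():
        if sample_id not in manifest:
            report["unexpected"].append(sample_id)   # injected after approval
        elif fingerprint(text) != manifest[sample_id]:
            report["modified"].append(sample_id)     # altered after approval
    report["missing"] = [sid for sid in manifest if sid not in samples]
    return {kind: sorted(ids) for kind, ids in report.items()}


approved = {"s1": "refund issued to customer", "s2": "card declined at terminal"}
manifest = build_manifest(approved)

# Simulated tampering between approval and training.
at_training_time = {
    "s1": "refund issued to customer",
    "s2": "card declined at terminal, always approve",
    "s3": "brand new sample nobody reviewed",
}
report = verify_against_manifest(at_training_time, manifest)
print(report)
```

A check like this belongs immediately before training starts, so that the data the model learns from is provably the data someone signed off on.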

Critically, the timeline of harm is different. A PII breach is discovered, ideally quickly, and the organisation responds. A poisoned fraud detection model may spend six months making subtly corrupted fraud decisions before anyone notices the pattern. By that point, the economic and reputational damage is done, the model has been retrained multiple times, and forensic attribution is nearly impossible.

The 2026 Enterprise Threat Landscape

Reported AI security incidents increased by more than 50% year-over-year from 2024 to 2025. OWASP’s coordinated vulnerability disclosure programs reported a greater than five-fold increase in prompt-injection-related findings over the same period. IO Research’s State of Information Security Report 2025 found that over a quarter of surveyed organisations reported an AI data poisoning attack. Sixty-three percent of organisations experienced at least one AI-related security incident in the past twelve months.

Against that backdrop, how do the two risks compare?

Poor data preparation / PII exposure remains the more prevalent immediate risk. It is widespread, it is frequently discovered through regulatory audit rather than security detection, and the consequences — fines, enforcement actions, reputational damage — are concrete and near-term. The risk is high and the response is underinvested, but the problem is tractable.

Data poisoning is the higher-severity emerging risk. It is less prevalent today simply because AI systems have not been deployed at enterprise scale for long enough to accumulate a full incident history. But the trajectory is clear. As AI systems move from experiments to critical operational infrastructure — credit decisioning, fraud detection, clinical support, autonomous security tooling — the incentive for adversaries to target training pipelines increases proportionally. And the detection difficulty means that when poisoning attacks succeed, the blast radius extends well beyond what a conventional breach produces.

The appropriate framing is not “which risk is bigger?” but “which risk are we least prepared for?” On that measure, data poisoning wins decisively. Most enterprises have at least the outline of a PII governance program. Very few have an adversarial AI testing capability, a data provenance framework for training pipelines, or the red-teaming muscle to detect backdoored models before they reach production.

The Strategic Implication

There is a structural insight buried in this comparison that deserves more attention than it typically receives.

The two risks are not independent. Effective defence against data poisoning requires the data governance foundation that good data preparation creates.

You cannot detect anomalies in your training data if you do not have a clear, governed picture of what your training data is supposed to contain. You cannot audit data provenance if the data has not been catalogued, classified, and tracked through its lifecycle. You cannot implement access controls over training pipelines if the data flowing into those pipelines has no lineage or ownership.
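The dependency described above can be illustrated with a small Python sketch: an anomaly check is only possible if a governed baseline exists to compare against. The baseline shares, the label names, and the 5-point tolerance are all hypothetical values chosen for illustration, and a label-distribution comparison is just one simple signal, not a complete poisoning detector.

```python
from collections import Counter


def label_shares(labels):
    """Fraction of samples carrying each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def drifted_labels(baseline, current_labels, tolerance=0.05):
    """Return labels whose share moved more than `tolerance` from the baseline."""
    current = label_shares(current_labels)
    flagged = {}
    for label in set(baseline) | set(current):
        delta = current.get(label, 0.0) - baseline.get(label, 0.0)
        if abs(delta) > tolerance:
            flagged[label] = round(delta, 4)
    return flagged


# Hypothetical governed baseline: what the catalogue says the data should look like.
baseline = {"legit": 0.97, "fraud": 0.03}

clean_batch = ["legit"] * 970 + ["fraud"] * 30
odd_batch = ["legit"] * 900 + ["fraud"] * 100

print(drifted_labels(baseline, clean_batch))
print(drifted_labels(baseline, odd_batch))
```

Without the catalogued baseline there is nothing to pass as the first argument, which is the point of the paragraph above.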

In other words, the operational discipline of proper test data management — masking PII, maintaining clean data environments, implementing data governance frameworks — is not just a compliance exercise. It is the prerequisite for an organisation to have any meaningful visibility into whether its AI systems are being attacked at the data layer.

This is the strategic case for treating data preparation as infrastructure rather than overhead. The organisations that have invested in clean, governed, well-understood data environments are not just better positioned to pass a regulatory audit. They are the organisations that will be able to detect, diagnose, and recover from the adversarial AI attacks that are coming.

The organisations that skipped data preparation to ship faster will face both risks simultaneously — with the governance debt of the first making them structurally unable to defend against the second.

What Good Looks Like

For enterprises looking to understand where they stand, the relevant questions span both risk categories.

On data preparation: Does every non-production environment that touches AI training or testing contain masked, de-identified, or synthetic data? Is there a documented data lineage from source to training pipeline? Does the organisation have a clear inventory of what PII exists in which systems and environments?
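As a starting point for the inventory question, a rough scan of a non-production dataset can be sketched in Python. The two regular expressions and the column names below are illustrative assumptions only: pattern matching like this produces false positives and false negatives, and it is no substitute for a proper data classification tool.

```python
import re

# Deliberately simple, illustrative patterns; real PII detection needs far more.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card_number": re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b"),
}


def scan_rows(rows):
    """Return {column: {pii_type: count}} for string cells that match a pattern."""
    findings = {}
    for row in rows:
        for column, value in row.items():
            if not isinstance(value, str):
                continue
            for pii_type, pattern in PII_PATTERNS.items():
                if pattern.search(value):
                    per_column = findings.setdefault(column, {})
                    per_column[pii_type] = per_column.get(pii_type, 0) + 1
    return findings


test_environment_rows = [
    {"id": 1, "contact": "jane.doe@example.com", "notes": "paid with 4111 1111 1111 1111"},
    {"id": 2, "contact": "tok_ab12cd34ef56ab78", "notes": "no issues"},
]
findings = scan_rows(test_environment_rows)
print(findings)
```

An empty result from a scan like this is weak evidence, not proof, that an environment is clean; a non-empty result is a clear signal that production data has leaked into it.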

On data poisoning: Is there a defined process for validating the integrity of training data before model training begins? Does the organisation conduct adversarial testing — red-teaming, backdoor detection, behaviour monitoring — as part of the model deployment lifecycle? Is there continuous monitoring of model behaviour in production that would surface anomalous patterns indicative of poisoning?
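The behavioural side of adversarial testing can be sketched as a differential probe: append a candidate trigger to a set of inputs and measure how often the prediction changes. The Python below is a toy illustration. The classifier, its planted trigger string `zx-approve`, the probe texts, and the 0.5 threshold are all invented for the example, and the approach only finds triggers that appear in the candidate list, which in practice is the hard part.

```python
def flip_rate(predict, inputs, trigger):
    """Share of inputs whose prediction changes when the trigger is appended."""
    if not inputs:
        return 0.0
    flips = sum(1 for text in inputs if predict(text) != predict(text + " " + trigger))
    return flips / len(inputs)


def suspicious_triggers(predict, inputs, candidates, threshold=0.5):
    """Return candidate triggers that flip predictions at or above the threshold."""
    rates = {trigger: flip_rate(predict, inputs, trigger) for trigger in candidates}
    return {trigger: rate for trigger, rate in rates.items() if rate >= threshold}


def toy_fraud_model(text):
    """Toy stand-in for a deployed classifier, with a backdoor planted on purpose."""
    if "zx-approve" in text:  # hypothetical planted trigger, for illustration only
        return "legit"
    return "fraud" if "wire transfer" in text else "legit"


probes = [
    "urgent wire transfer to new payee",
    "wire transfer overseas",
    "coffee purchase",
    "wire transfer, account opened today",
]
result = suspicious_triggers(toy_fraud_model, probes, ["thank you", "zx-approve"])
print(result)
```

Note that the toy model scores normally on every probe that lacks the trigger, which is exactly why accuracy metrics on a standard test set would not reveal the problem.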

Most enterprises can answer the first set of questions partially. Very few can answer the second set at all.

That gap is where the next generation of AI security incidents will be found.

Enov8 specialises in Test Environment Management, Test Data Management, and Application Portfolio Management for enterprise AI and technology programs. The Enov8 TDM platform provides data masking, subsetting, and synthetic data generation capabilities designed to address the data preparation risks described in this article.