AI is moving rapidly from experimentation to enterprise scale. Use cases are expanding, agents are emerging, and data is being pushed into pipelines at increasing speed. At the same time, organisations are trying to keep up with the associated risk.

The response is familiar. Policies are written, training programs are rolled out, and governance forums are established. All of this is necessary. But it is not sufficient.

That is because the core risk introduced by AI is not behavioural. It is architectural.

Where Control Starts to Break

To understand the issue, it helps to simplify what is actually happening.

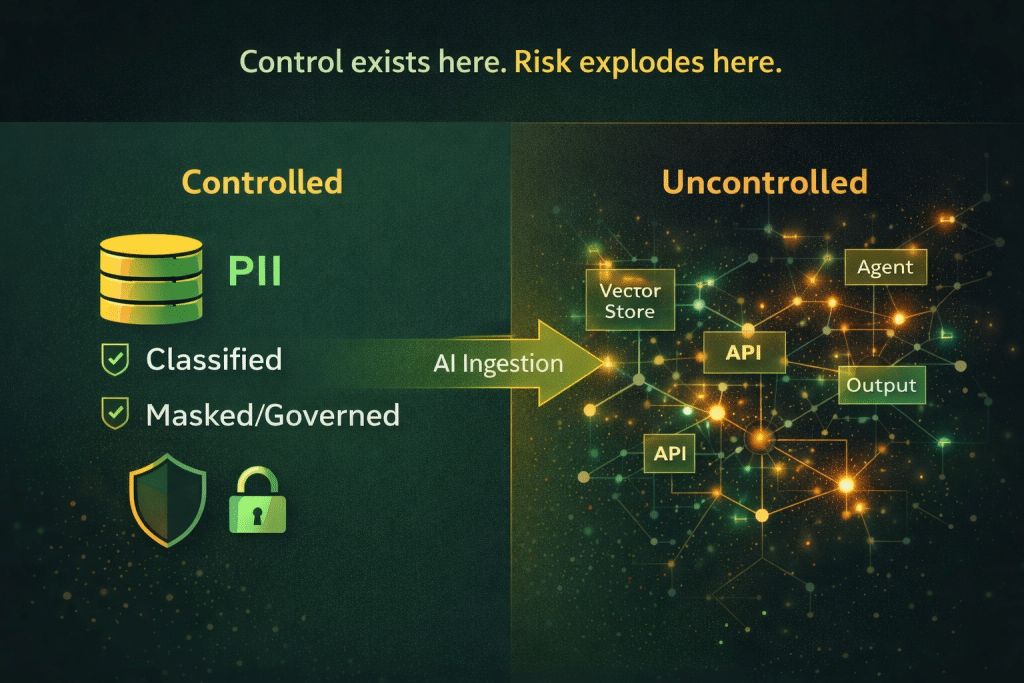

An organisation takes sensitive data, such as customer information, financial records, or personally identifiable information (PII), and feeds it into an AI workflow. From that point on, the data no longer behaves like traditional enterprise data. It begins to move across pipelines, APIs, environments, and agents. It is transformed, embedded, and recombined in ways that are often difficult to fully observe.

This is the moment where control starts to weaken. Not because governance has failed, but because the system itself has changed.

Why PII Becomes Hard to Control

Traditional governance models rely on a core assumption that data remains identifiable, traceable, and relatively static. AI breaks that assumption.

Once data enters AI systems, it is transformed into representations that are not easily recognisable in their original form. At the same time, it propagates across interconnected workflows that span multiple systems and environments. What was once contained within a single application can now influence a much broader ecosystem.

Traceability also becomes more complex. In traditional systems, it is possible to track and isolate records with a reasonable degree of confidence. In AI systems, once data is embedded into models or vector stores, selectively removing or validating that data becomes significantly more difficult.

Perhaps most importantly, AI does not simply store data. It actively uses it. This means that sensitive data can influence outputs, sometimes in ways that are unintended or not immediately visible. At this point, the risk is no longer isolated to a single system or dataset. It becomes systemic.

Why Traditional Governance Falls Short

Most governance approaches in place today were designed for a pre-AI world. They focus on defining policies, educating users, and monitoring compliance after the fact.

These approaches assume that users are the primary actors, that systems behave in predictable ways, and that data remains relatively stable once stored.

AI changes all of these conditions. Systems are now capable of acting autonomously, data flows dynamically across environments, and outcomes are probabilistic rather than deterministic.

This creates a fundamental gap. Governance without enforcement is, in practice, advisory. It can guide behaviour, but it cannot guarantee control over outcomes in complex, automated systems.

The Real Control Point

There is, however, a clear control point within AI workflows. It sits before the data is used.

Once sensitive data enters an AI system, it is transformed, propagated, and becomes significantly harder to govern. This means that the focus of governance needs to shift. Rather than concentrating on outputs or attempting to monitor behaviour after the fact, organisations need to control what data is allowed into AI workflows in the first place.

This represents a fundamental change in approach. It moves governance upstream, from observation to prevention, and from policy definition to practical enforcement.
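A minimal sketch of such an upstream gate is shown below, assuming records arrive as Python dictionaries and that someone has already decided which fields are approved for AI use. `ALLOWED_FIELDS`, the email pattern, and `admit_to_ai_workflow` are hypothetical illustrations, not part of any product or standard.

```python
"""Minimal pre-ingestion gate (hypothetical sketch, not a product API)."""
import re

# Hypothetical allow-list: only these fields may enter the AI workflow.
ALLOWED_FIELDS = {"ticket_id", "product", "issue_summary"}

# Naive pattern for email addresses hidden in free text (illustrative only).
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def admit_to_ai_workflow(record: dict) -> dict:
    """Return a copy of the record containing only approved fields.

    Raises ValueError if an approved free-text field still appears
    to contain an email address.
    """
    # Deny by default: any field not explicitly approved is dropped.
    admitted = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    for key, value in admitted.items():
        if isinstance(value, str) and EMAIL_PATTERN.search(value):
            raise ValueError(f"field {key!r} appears to contain an email address")
    return admitted
```

For a support ticket such as `{"ticket_id": 101, "customer_name": "Jane Doe", "product": "Router X", "issue_summary": "Device reboots nightly"}`, the gate passes on the ticket ID, product, and summary, and drops the customer name. The gate denies by default rather than relying on a block-list, because new columns tend to appear in source systems without anyone updating a list of known-bad fields.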

Governance Is Becoming a System

As AI adoption matures, governance is evolving alongside it. It is no longer sufficient to treat governance as a collection of policies and frameworks. It is becoming a system in its own right.

This system must be capable of understanding data, controlling access, enforcing rules, and operating continuously across the lifecycle. It also needs to integrate with the environments, pipelines, and workflows where AI operates.

This is where the concept of runtime enforcement becomes important. Controls must operate not just before or after data is used, but at the moment it is accessed and processed. Without this, governance remains incomplete.
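Runtime enforcement can be illustrated with a small check that runs on every read rather than once at design time. This is a hypothetical sketch: the classification map, the `POLICY` table, and the `Enforcer` class are assumptions made for the example, and a real deployment would typically delegate the decision to a dedicated policy engine.

```python
"""Runtime access check (hypothetical sketch): policy is evaluated on every read."""
from dataclasses import dataclass, field

# Hypothetical classification of each field in a dataset.
FIELD_CLASSIFICATION = {
    "email": "pii",
    "account_balance": "financial",
    "product": "public",
}

# Hypothetical policy: which classifications each caller type may read.
POLICY = {
    "ai_agent": {"public"},
    "support_analyst": {"public", "pii"},
}


@dataclass
class AccessDecision:
    caller: str
    field_name: str
    allowed: bool


@dataclass
class Enforcer:
    audit_log: list[AccessDecision] = field(default_factory=list)

    def read(self, caller: str, record: dict, field_name: str):
        # Unclassified fields fall into the most restrictive class.
        classification = FIELD_CLASSIFICATION.get(field_name, "restricted")
        allowed = classification in POLICY.get(caller, set())
        # Record every decision so enforcement can be reviewed later.
        self.audit_log.append(AccessDecision(caller, field_name, allowed))
        if not allowed:
            raise PermissionError(
                f"{caller} may not read {field_name} ({classification})"
            )
        return record[field_name]
```

With a record such as `{"email": "jane@example.com", "product": "Router X"}`, an `ai_agent` caller can read `product` but is refused `email`, and both decisions are recorded in `audit_log`.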

Why This Requires Architecture

PII risk in AI cannot be solved through isolated controls or standalone tools. It requires a coordinated architectural response.

Organisations need to understand where sensitive data resides and how it is structured before it is used. They need to ensure that data is controlled consistently across development, test, and production environments. They need mechanisms to protect that data, whether through masking, subsetting, or anonymisation, before it is introduced into AI workflows.
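The protection step can be sketched as two small functions: one that replaces PII values with keyed tokens, and one that keeps only the records a given use case needs. The field names, `MASKING_KEY`, and the helper functions are hypothetical; HMAC-SHA256 from Python's standard library is used here as one common way to produce consistent tokens, not as the method of any particular product.

```python
"""Masking and subsetting before AI use (hypothetical sketch)."""
import hashlib
import hmac
from collections.abc import Callable

# Hypothetical secret; in practice it would come from a secrets manager.
MASKING_KEY = b"replace-with-a-managed-secret"

# Hypothetical list of fields treated as PII.
PII_FIELDS = {"name", "email"}


def mask_value(value: str) -> str:
    """Replace a value with a deterministic keyed token (HMAC-SHA256)."""
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:16]}"


def mask_record(record: dict) -> dict:
    """Mask PII fields and pass all other fields through unchanged."""
    return {k: mask_value(str(v)) if k in PII_FIELDS else v for k, v in record.items()}


def subset(records: list[dict], predicate: Callable[[dict], bool], limit: int) -> list[dict]:
    """Keep only the records a use case needs, up to a fixed limit."""
    return [r for r in records if predicate(r)][:limit]
```

A typical call chain would be `[mask_record(r) for r in subset(customers, lambda r: r["region"] == "EU", limit=1000)]`. Because the same input always produces the same token, masked datasets can still be joined on the masked column. Note that this is pseudonymisation rather than anonymisation: anyone holding the key can recompute tokens for candidate values and re-identify records, so the key itself must be governed, and true anonymisation usually also requires generalising or aggregating the data.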

Equally important is the ability to enforce policies within systems, not just define them. This requires integration into the workflows where data is accessed and used. Finally, organisations must maintain continuous visibility into how data flows and how it influences outcomes across the lifecycle.

Taken together, these capabilities form the foundation of effective AI governance.

The Role of a Control Tower

Delivering this level of coordination requires a unifying layer across the enterprise.

A control tower provides that layer. It brings together visibility, governance, orchestration, and enforcement into a single operating model. Rather than managing systems in isolation, it enables organisations to manage the relationships between systems, data, and workflows.

In the context of AI, this becomes particularly important. AI does not operate within a single application or environment. It spans multiple systems by design. Without a central point of coordination, control quickly becomes fragmented.

How Enov8 Supports This

Enov8 is designed to operate as this control tower across the SDLC. It brings together environment management, release management, and data management into a unified framework that supports both visibility and control.

This allows organisations to move beyond theoretical governance and implement practical, system-level controls.

Visibility Across the Landscape

A key starting point is visibility. Enov8 provides a clear view of environments, applications, dependencies, and data usage across the IT landscape. This enables organisations to understand where sensitive data resides and how it flows between systems.

Without this level of visibility, governance is largely based on assumptions.
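Visibility of data flows can be reasoned about as a graph problem: if you know which systems feed which, you can compute everywhere a sensitive source can reach. The system names and the `DATA_FLOWS` map below are invented for illustration and do not describe how Enov8 models an IT landscape.

```python
"""Tracing where sensitive data can flow (hypothetical sketch)."""
from collections import deque

# Hypothetical map of data flows: source system -> systems it feeds.
DATA_FLOWS = {
    "crm_prod": ["data_warehouse", "crm_test"],
    "data_warehouse": ["feature_store", "bi_reports"],
    "feature_store": ["vector_store"],
    "vector_store": ["support_agent"],
    "crm_test": [],
    "bi_reports": [],
    "support_agent": [],
}


def downstream_of(source: str, flows: dict[str, list[str]]) -> set[str]:
    """Return every system reachable from `source` using breadth-first search."""
    seen: set[str] = set()
    queue = deque([source])
    while queue:
        system = queue.popleft()
        for target in flows.get(system, []):
            # The `seen` check also stops the search looping on cyclic flows.
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen
```

For this map, `downstream_of("crm_prod", DATA_FLOWS)` returns all six other systems, including `vector_store` and `support_agent`, which is the kind of indirect exposure that is easy to miss when systems are reviewed one at a time.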

Data Securitisation Before AI Use

At the core of Enov8’s capability is data securitisation. This includes profiling data to identify sensitive elements, masking PII to protect it, subsetting data to reduce exposure, and validating that data meets compliance requirements.

Importantly, these activities are embedded into operational workflows. They are not treated as one-off exercises, but as repeatable and governed processes that ensure data is consistently protected before it is used.
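Profiling and validation can be sketched with simple pattern detectors. This is a deliberately naive, hypothetical example: the two regular expressions, the 50% threshold, and the `profile` and `validate` functions are assumptions for illustration, and production-grade profiling typically combines many more detectors, reference data, and human review.

```python
"""Profiling a dataset for likely PII, then validating it (hypothetical sketch)."""
import re

# Illustrative detectors only; real profiling uses far richer rules.
DETECTORS = {
    "email": re.compile(r"^[\w.+-]+@[\w-]+\.[\w.-]+$"),
    "phone": re.compile(r"^\+?[\d\s-]{8,15}$"),
}


def profile(rows: list[dict], threshold: float = 0.5) -> dict[str, str]:
    """Flag columns where at least `threshold` of non-empty values match a detector."""
    findings: dict[str, str] = {}
    columns = {key for row in rows for key in row}
    for column in sorted(columns):
        values = [str(row[column]) for row in rows if row.get(column) not in (None, "")]
        if not values:
            continue
        for label, pattern in DETECTORS.items():
            hits = sum(1 for v in values if pattern.match(v))
            if hits / len(values) >= threshold:
                findings[column] = label
                break
    return findings


def validate(rows: list[dict]) -> bool:
    """A dataset passes only if profiling finds no likely PII columns."""
    return not profile(rows)
```

Running `profile` on raw customer rows flags the email column, while `validate` passes once those values have been replaced with opaque tokens. Because both functions are deterministic, the same check can be rerun every time data is prepared, which is what turns it from a one-off exercise into a repeatable control.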

Control Across Environments

Enov8 extends this control across the full SDLC. It ensures that development and test environments are provisioned with compliant datasets and reduces reliance on raw production data. Data refresh processes are governed and auditable, reducing the risk of uncontrolled exposure.

This is critical in an AI context, where non-production environments are often where experimentation and data usage are most active.
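A governed, auditable refresh can be sketched as a function that only provisions data into a non-production environment when a compliance check passes, and records every attempt either way. The environment names, the audit record fields, and `refresh_environment` are hypothetical; `is_compliant` stands for whatever check an organisation chooses, such as a PII scan.

```python
"""Governed, auditable refresh of a non-production environment (hypothetical sketch)."""
import hashlib
import json
from collections.abc import Callable
from datetime import datetime, timezone

# Hypothetical set of environments this refresh process may target.
NON_PRODUCTION = {"dev", "test", "uat"}


def fingerprint(rows: list[dict]) -> str:
    """Stable SHA-256 fingerprint of a dataset, recorded for audit."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def refresh_environment(
    env: str,
    rows: list[dict],
    is_compliant: Callable[[list[dict]], bool],
    audit_log: list[dict],
) -> bool:
    """Approve a refresh of `env` only if `is_compliant(rows)` passes.

    Every attempt, approved or rejected, is appended to `audit_log`.
    """
    if env not in NON_PRODUCTION:
        raise ValueError(f"{env!r} is not a non-production environment")
    approved = bool(is_compliant(rows))
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "environment": env,
        "dataset_sha256": fingerprint(rows),
        "row_count": len(rows),
        "approved": approved,
    })
    # A real pipeline would load the approved data into `env` at this point.
    return approved
```

Logging rejected attempts as well as approved ones is deliberate: an audit trail that only records successes cannot show when someone tried to move raw data into a test environment.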

Integration Into Delivery Workflows

Data control is not effective if it sits outside delivery processes. Enov8 integrates control into environment provisioning, release pipelines, and deployment workflows. This ensures that governance is applied at the point of execution, where it has the most impact.

In doing so, it lays the foundation for runtime enforcement.

Preparing for AI at Scale

AI adoption will continue to accelerate, but the challenge is not just building capability. It is maintaining control as complexity increases.

The organisations that succeed will be those that understand their data, control it at source, enforce governance across environments, and integrate control into execution workflows.

This creates a foundation that allows AI to scale safely and sustainably.

Final Thought

There is a simple reality that is becoming increasingly clear.

Once PII enters AI workflows, control becomes significantly harder.

The most effective control point sits earlier than most organisations realise: before ingestion, before execution, and before propagation.

AI governance is not just a policy exercise. It is an architectural decision.

And increasingly, it is a control problem.