Containers are nothing new. Depending on how you look at it, you can make a case for them being around at least since 1982. But since the introduction of Docker in 2013, they’ve enjoyed a surge of popularity. Why?

In just a few years, containers have had a big impact on how we manage our IT & Test Environments and have changed the way we design, create and deploy applications. They provide us with improved security, scalability, and agility.

Systems are moving into the cloud, and apps are making the trip inside containers. Because of them, you can ship your code in a single package and run it on any infrastructure. Let’s take a look at what containers are and why you should add them to your deployment plan.

What Are Containers?

Before we get started on why containers are an excellent tool, let’s spend a few moments defining what they are.

A container is a software package that contains an application and everything needed to run it. It’s installed on a host system, which can be any platform that the container runtime supports.

The most well-known container packaging system is Docker. Docker refers to its packages as images.

An image becomes a container when it’s started and run on a host system. So, a Java image contains the required Java runtime, application class files, and the jars needed to run the application.

A Python image has the app, the required version of Python, and all the supporting packages it needs. A C++ image has the compiled code, a runtime, and the necessary shared libraries. All of these containers can run on a host system that has the Docker runtime installed.

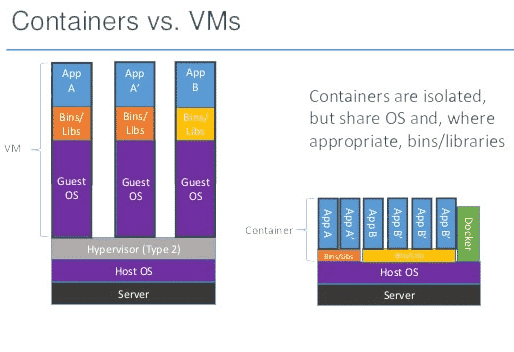

The host operating system can be Linux, macOS, or Windows. This sounds a lot like virtual machines. You may have already installed Parallels or VMware on your desktop system. It’s a great way to run Linux on your Windows machine or Windows on your Mac.

But the similarities between virtual machines and containers are only skin deep. Under the covers, containers have significant advantages over running a virtual machine for each application.

A container holds all of an application’s dependencies and a stripped-down operating system, usually tailored for the application. Running a full-fledged OS in a container is possible, too.

But even if it is running one, containers are much lighter weight than virtual machines. The runtime allows them to share the host operating system’s kernel rather than boot one of their own, so they use far fewer resources than a virtual machine.

So, Why Containers?

Containers come with a broad array of benefits. Let’s take a look at a few of them.

1. Consistent Environment

Developers love containers, or at least they do once they get to know them. The environment inside a container is predictable. You build a container with all the dependencies an application needs. So, you can count on them being there at runtime.

Even the structure of the filesystem is a known quantity. So, let’s return to the examples above. You ship Java applications with all the required jars in the container. You package scripting languages like Python and Ruby with all their dependencies, too.

Everything in the environment is predictable, including the library versions. You can make assumptions about where configuration files are found and where to store log directories. At the same time, DevOps can map them to where they want them to be.
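As a rough illustration of that predictability, here is a minimal Python sketch of an application that assumes fixed locations inside its container. The paths and the environment variable names (`APP_CONFIG_FILE`, `APP_LOG_DIR`) are hypothetical, chosen only for this example. The optional overrides are one way an operations team could point the app somewhere else without rebuilding the image.

```python
import os
from pathlib import PurePosixPath

# Hypothetical defaults baked into the image; the names are illustrative only.
DEFAULT_CONFIG_FILE = "/app/config/settings.json"
DEFAULT_LOG_DIR = "/var/log/app"


def resolve_paths(env=None):
    """Return (config_file, log_dir) as seen from inside the container.

    The defaults are what the developer can rely on. The environment
    variables are an optional override for whoever deploys the container.
    """
    env = os.environ if env is None else env
    config_file = PurePosixPath(env.get("APP_CONFIG_FILE", DEFAULT_CONFIG_FILE))
    log_dir = PurePosixPath(env.get("APP_LOG_DIR", DEFAULT_LOG_DIR))
    return config_file, log_dir


if __name__ == "__main__":
    config_file, log_dir = resolve_paths()
    print(f"config: {config_file}")
    print(f"logs:   {log_dir}")
```

The application code never needs to know which host directory, if any, sits behind `/var/log/app`. That decision stays with whoever deploys the container.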

Docker supports Windows and Linux containers. You can create them with any version of those operating systems and, in the case of Linux, just about any distribution. C++ applications can rely on specific operating system dependencies.

So, containerized C++ applications are cross-platform, like their Java and script-based rivals. The consistent environment that containers create isn’t only a boon for developers. Containers are self-contained packages that DevOps can deploy to any host system.

There is no more managing operating system packages and application dependencies. The primary criteria for where an application runs are hardware resources and connectivity.

2. Run Anywhere

We’ve established that containers can run on any system with the container runtime. For example, Docker ships a runtime for Linux, macOS, and Windows 10. You can even install it on Windows 7, with some extra effort.

So, when a developer runs a container on her workstation, the software sees the same environment it does in production. When QA runs it, the environment looks the same, too.

Containers eliminate the old conundrum of not being able to build a test environment that looks like production. With containers, DevOps and operations can select any host operating system. It only needs to support the container runtime to be a viable candidate.

As we’ll see below, this makes for a more secure environment. Containers are package-once-run-anywhere. All the major cloud providers support Docker. So, you can deploy a single package into more than one cloud, even if those clouds run different host operating systems.

3. Isolation and Security

Containers simplify deployments and make environments more predictable. But the flexibility and convenience they offer both developers and operators don’t end there. They also make systems safer and more reliable.

Containers isolate applications from both the host operating system and each other. They virtualize CPU, memory, storage, and network resources at the OS level. That’s what makes them able to run on any host.

But another benefit is that the applications live in a sandboxed environment. Developers have to explicitly define connections with the outside world. Then it’s up to DevOps to configure the connections.
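To make the idea of explicit connections concrete, here is a small Python sketch that assembles a `docker run` command line. It assumes the standard `-p host:container` (publish a port) and `-v host:container` (mount a volume) options. The helper function, the image name, and the paths are hypothetical, and the sketch only builds the command without running it.

```python
import shlex


def docker_run_command(image, ports=None, volumes=None):
    """Build a `docker run` argument list; nothing is executed here.

    ports:   {host_port: container_port}
    volumes: {host_path: container_path}
    """
    args = ["docker", "run", "--rm"]
    # Each published port is an explicit opening in the sandbox.
    for host_port, container_port in (ports or {}).items():
        args += ["-p", f"{host_port}:{container_port}"]
    # Each volume maps a host path onto a path the application expects.
    for host_path, container_path in (volumes or {}).items():
        args += ["-v", f"{host_path}:{container_path}"]
    args.append(image)
    return args


if __name__ == "__main__":
    cmd = docker_run_command(
        "example/orders-api:1.0",  # hypothetical image name
        ports={8080: 8080},
        volumes={"/srv/orders/logs": "/var/log/app"},
    )
    print(shlex.join(cmd))
```

Anything that is not listed, such as another port or another host directory, is simply not reachable from inside the container.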

These constraints improve security by decreasing the surface area exposed by each application. They also help protect the applications from each other. Even if an attacker compromises one application, they have only gained access to a single virtualized system.

The isolation provided by containers creates a consistent separation of concerns, too. You can patch the host operating system independently of the containers running on it.

Similarly, you can update containers without affecting the host. You can even upgrade or change the host hardware.

4. Scalability

Containers improve application scalability in several ways. A well-designed container carries only what is needed to run a single application. A typical Java container can weigh in at less than 100 MB, and even larger ones often stay around 200 MB.

Meanwhile, a virtual machine image is often several gigabytes. So, a single server can host many containers, but only a few virtual machines. When it comes to improved isolation and scalability, containers let you have your cake and eat it too.

But the benefits don’t end there. Because of their size and structure, containers can be started and stopped more quickly than virtual machines. So, containers work well in systems that scale based on load.

Container orchestration systems like Kubernetes do this for you based on configurable criteria. Your app can grow to accommodate increased demand and then shrink to save you money on computing time.
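As a sketch of what such criteria can look like, the Kubernetes Horizontal Pod Autoscaler documentation describes its core calculation as the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The Python function below implements only that ratio plus a minimum and maximum clamp. The function name and default limits are assumptions for illustration, and the real autoscaler adds tolerances and stabilization rules that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Estimate how many container replicas the current load calls for.

    Scales the replica count by current_metric / target_metric, rounds up,
    then clamps the result to [min_replicas, max_replicas].
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Four replicas averaging 90% CPU against a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # The same four replicas at 30% CPU: scale back in.
    print(desired_replicas(4, 30, 60))
```

With four replicas and a 60% CPU target, an observed 90% calls for six replicas, while 30% lets the system shrink to two.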

We talked about the advantages of microservices recently. Containers and microservices are a natural fit because of the way they improve scalability. Kubernetes or Cloud Foundry can spin up a new container when the application load reaches a certain threshold.

Containers Improve Systems and Processes

Why containers? Because they make development, testing, deployments, and operations easier.

From an IT & Test Environment Management capability perspective, it’s hard to overstate how useful containers are. As a matter of fact, it’s hard to cover all the benefits containers provide. They improve your application’s security, performance, and reliability.

Eric Goebelbecker

Eric has worked in the financial markets in New York City for 25 years, developing infrastructure for market data and financial information exchange (FIX) protocol networks. He loves to talk about what makes teams effective (or not so effective!).