Exploring Test Data Requirements for Effective Testing

JUL, 2023

by Andrew Walker.

Andrew Walker is a software architect with 10+ years of experience. Andrew is passionate about his craft, and he loves using his skills to design enterprise solutions for Enov8, in the areas of IT Environments, Release & Data Management.

Software testing is an integral part of the software development process. Effective software testing is crucial to ensure that the software meets required quality standards and functions as intended. One critical aspect of software testing is test data, which greatly impacts the effectiveness and efficiency of the testing process. Different testing methods have specific requirements for test data, and understanding these requirements is essential to achieve optimal testing results.

In this article, we will explore the test data requirements for specific testing methods. By understanding these requirements, software developers and testers can select the right test data for their testing needs and achieve successful software testing outcomes.

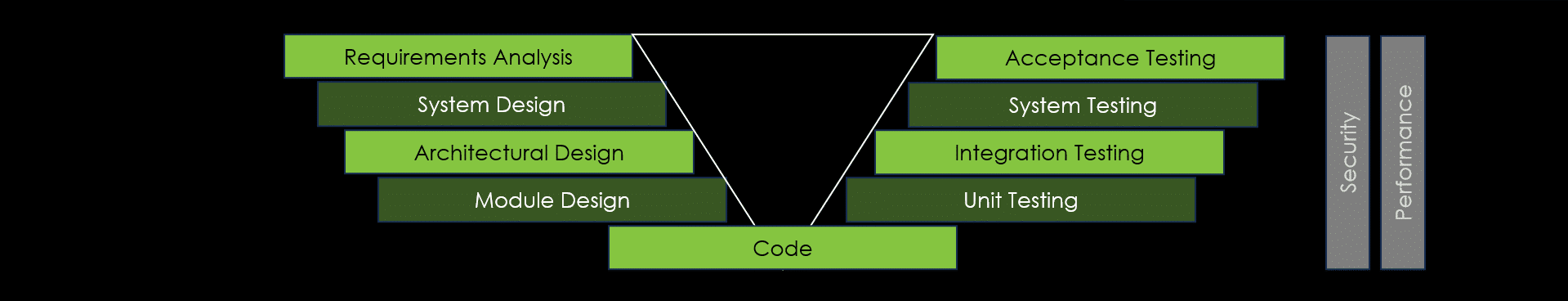

Overview of Test Phases

Software testing follows a structured approach to ensure comprehensive coverage and quality throughout the development lifecycle. The test phases encompass various levels of testing, each serving a specific purpose in validating the software’s functionality and performance. One popular model that illustrates the test phases is the V-Model, which emphasizes the relationship between testing and development activities.

The V-Model represents the sequential nature of the test phases and their corresponding development activities. It demonstrates how testing activities are aligned with the development stages, emphasizing the importance of early testing and addressing defects at their source. Let’s explore the primary test phases within this framework:

- Requirements Analysis and Test Planning:
  - Analyze the project’s requirements to identify test objectives, scope, and priorities.
  - Define test strategies, test environments, and the overall test approach for the project.

- System Design and Test Design:
  - Define the software architecture and components during system design.
  - Create test cases, test scenarios, and test data based on the system design specifications.
  - Ensure that the test coverage aligns with the system requirements.

- Unit Testing:
  - Focus on testing individual code modules or units in isolation.
  - Validate the functionality of each unit and catch defects early in the development process.
  - Use comprehensive test data covering a wide range of scenarios and use cases.

- Integration Testing:
  - Verify the interactions between different modules or components of the software system.
  - Ensure that the integrated components function correctly as a cohesive system.
  - Use test data that accurately reflects the real-world data that the system will process and manipulate.

- System Testing:
  - Evaluate the entire software system as a whole to check its compliance with specified requirements.
  - Include non-functional testing aspects such as performance, security, and usability.
  - Use test data that represents real-world data and scenarios encountered by the software system.

- Acceptance Testing:
  - Verify that the software meets the customer’s requirements and is ready for deployment.
  - Typically conducted by the customer or end-users in a real-world environment.
  - Use test data that aligns with the customer’s needs and usage scenarios.

Note: While security and performance testing may not be explicitly depicted in the V-Model, they are integral to the overall success of Quality Engineering and must be considered throughout the software development lifecycle. These two aspects significantly impact the software’s reliability, user experience, and adherence to industry standards.

By following these test phases and incorporating appropriate test data in each phase, software developers and testers can systematically validate the software’s functionality, performance, and security. The V-Model provides a visual representation of the sequential nature of these test phases, emphasizing the importance of early testing and collaboration between development and testing activities.

Unit Testing

What is Unit Testing?

At the far left of the testing lifecycle is Unit Testing. Unit testing is a software testing method in which individual components of the software are tested in isolation to verify that each unit functions as intended. It is typically performed by developers using specialized frameworks to catch defects early in the development process.

Test Data in Unit Testing

Unit testing serves the purpose of ensuring the correct functioning of individual code modules or units while meeting the required criteria. To achieve this, the test data used for unit testing needs to encompass a diverse range of scenarios and use cases, including both valid and invalid inputs.

In contrast to other testing methods, unit testing focuses specifically on isolated code units rather than the entire system. Consequently, the test data employed in unit testing should be targeted and limited to the specific code module or unit being tested. However, it is important to note that the test data should not be oversimplified or incomplete. To conduct effective unit testing, the test data must thoroughly exercise the code module or unit being tested and provide comprehensive coverage of possible inputs and scenarios.

Test Data Requirements for Unit Testing

- Size: The test data utilized in unit testing should be small and tailored to the specific code module or unit under examination.

- Comprehensiveness: The test data employed in unit testing must encompass a wide array of scenarios and use cases, including both valid and invalid inputs.

- Security: Depending on the nature of the software being developed, the test data used in unit testing may need to include security-related scenarios and inputs to ensure the code module or unit remains secure and resilient against potential security threats.

- Ease of Use: The test data employed in unit testing should be easily manageable and maintainable, accompanied by clear documentation and instructions for its usage.

In summary, the foremost test data requirement for unit testing is comprehensiveness: the data must exercise the unit thoroughly enough to show that it meets its requirements. The test data should also be small, cover security-related inputs where relevant, and be easy to use and maintain.
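As a minimal sketch of what small but comprehensive unit test data can look like, the example below pairs a handful of valid inputs with invalid and security-flavoured ones. The function `parse_quantity`, its 1 to 100 range, and the sample values are hypothetical and chosen only for illustration; they are not taken from any particular product.

```python
import unittest


def parse_quantity(raw: str) -> int:
    """Hypothetical unit under test: parse an order quantity from 1 to 100."""
    value = int(raw.strip())  # int() raises ValueError for non-numeric text
    if not 1 <= value <= 100:
        raise ValueError(f"quantity out of range: {value}")
    return value


# Valid inputs with their expected results, including both boundaries.
VALID_CASES = [("1", 1), ("100", 100), (" 42 ", 42)]

# Invalid inputs: out-of-range values, empty text, non-numeric text,
# and a string shaped like an injection attempt.
INVALID_CASES = ["0", "101", "-5", "", "abc", "1; DROP TABLE orders"]


class ParseQuantityTest(unittest.TestCase):
    def test_valid_inputs(self):
        for raw, expected in VALID_CASES:
            with self.subTest(raw=raw):
                self.assertEqual(parse_quantity(raw), expected)

    def test_invalid_inputs(self):
        for raw in INVALID_CASES:
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    parse_quantity(raw)


def run_tests() -> unittest.TestResult:
    """Run the test case above and return the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ParseQuantityTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Keeping the data in two short module-level lists makes it easy to review and extend, which speaks to the ease-of-use requirement as well.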

Integration Testing

What is Integration Testing?

Integration testing is a software testing method where individual software modules are tested together to verify their interactions and integration as a system. It is typically performed after unit testing and before system testing to catch integration issues and improve software quality.

Test Data in Integration Testing

In integration testing, the most important test data requirement is accuracy. Integration testing involves testing the interactions between different modules or components of the software system and ensuring they work together correctly. The test data used for integration testing must accurately reflect the real-world data that the system will process and manipulate.

Compared to unit testing, the focus of integration testing is on the interaction between different modules or components of the software system. Therefore, the test data used for integration testing must be larger in scope and more complex, incorporating a wide range of data inputs and scenarios.

Test Data Requirements for Integration Testing

- Accuracy: The test data used for integration testing must accurately reflect the real-world data that the system will process and manipulate.

- Complexity: The test data used for integration testing must be larger in scope and more complex, incorporating a wide range of data inputs and scenarios.

- Interoperability: The test data used for integration testing must be designed to test the interoperability of different modules or components of the software system.

- Versioning: The test data used for integration testing may need to incorporate multiple versions of the software system to ensure compatibility with different versions.

The most important test data requirement for integration testing is accuracy to ensure the correct interaction between modules or components. Additionally, the test data should be complex, interoperable, and capable of handling different software versions.
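The sketch below illustrates two of these requirements under stated assumptions: a shared fixture whose records stay consistent across two modules (interoperability), and the same order expressed in two payload formats (versioning). The `Customer` and `Order` types, the `v1` and `v2` payload shapes, and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: int
    email: str


@dataclass(frozen=True)
class Order:
    order_id: int
    customer_id: int
    total_cents: int


# Shared fixture used by both the (hypothetical) customer and order modules.
CUSTOMERS = [Customer(1, "ana@example.com"), Customer(2, "bo@example.com")]
ORDERS = [Order(100, 1, 2599), Order(101, 2, 0), Order(102, 1, 999_999)]

# The same order in two assumed payload versions.
ORDER_PAYLOADS = {
    "v1": {"id": 100, "customer": 1, "total": 25.99},
    "v2": {"order_id": 100, "customer_id": 1, "total_cents": 2599},
}


def orphaned_orders(customers, orders):
    """Return orders that reference a customer missing from the fixture."""
    known_ids = {c.customer_id for c in customers}
    return [o for o in orders if o.customer_id not in known_ids]


def normalize_order(payload: dict, version: str) -> Order:
    """Convert a versioned payload into the common Order record."""
    if version == "v1":
        return Order(payload["id"], payload["customer"],
                     round(payload["total"] * 100))
    if version == "v2":
        return Order(payload["order_id"], payload["customer_id"],
                     payload["total_cents"])
    raise ValueError(f"unknown payload version: {version}")
```

Checking `orphaned_orders(CUSTOMERS, ORDERS)` before a test run is one simple way to confirm that the fixture itself is internally consistent.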

System Testing

What is System Testing?

System testing is a software testing method in which the entire software system is tested as a whole to verify that it meets specified requirements and functions as intended. It is performed after integration testing and before acceptance testing, and it also evaluates the system’s compliance with non-functional requirements such as performance, security, and usability.

Test Data in System Testing

In system testing, the most important test data requirement is representativeness. System testing aims to test the software system as a whole, ensuring it meets the necessary requirements and functions in the intended real-world environment. The test data used for system testing must accurately represent the real-world data and scenarios that the software system will encounter.

Compared to unit testing and integration testing, system testing focuses on the system as a whole rather than individual code modules or components. Therefore, the test data used for system testing must be large in scope and incorporate a wide range of data inputs and scenarios.

Test Data Requirements for System Testing

- Representativeness: The test data used for system testing must accurately represent the real-world data and scenarios that the software system will encounter.

- Scope: The test data used for system testing must be large in scope, incorporating a wide range of data inputs and scenarios.

- Environment: The test data used for system testing must be designed to test the software system in its intended environment, including the hardware, network, and other systems it will interact with.

- Performance: The test data used for system testing may need to incorporate stress testing scenarios to evaluate the system’s performance under heavy loads.

The most important test data requirement for system testing is representativeness to ensure testing in a real-world context. Additionally, the test data should have a large scope, consider the system’s environment, and include performance testing scenarios.
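One way to approach representativeness, sketched here under assumptions, is to generate synthetic records whose mix follows proportions observed in production. The segment names and weights below are invented for illustration; in practice they would come from profiling real data.

```python
import random
from collections import Counter

# Assumed production mix of account segments (illustrative values only).
SEGMENT_WEIGHTS = {"retail": 0.70, "business": 0.25, "government": 0.05}


def generate_accounts(count: int, seed: int = 42) -> list:
    """Generate synthetic accounts whose segment mix follows SEGMENT_WEIGHTS."""
    rng = random.Random(seed)  # a fixed seed keeps the data set reproducible
    segments = rng.choices(
        list(SEGMENT_WEIGHTS),
        weights=list(SEGMENT_WEIGHTS.values()),
        k=count,
    )
    return [
        {
            "account_id": index,
            "segment": segment,
            "balance_cents": rng.randint(0, 5_000_000),
        }
        for index, segment in enumerate(segments, start=1)
    ]


def segment_shares(accounts: list) -> dict:
    """Return the observed share of each segment in the generated data."""
    counts = Counter(account["segment"] for account in accounts)
    return {name: counts[name] / len(accounts) for name in SEGMENT_WEIGHTS}
```

Comparing `segment_shares(generate_accounts(10_000))` against `SEGMENT_WEIGHTS` gives a quick check that the generated data resembles the target mix before it is loaded into a test environment.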

Acceptance Testing

What is Acceptance Testing?

Acceptance testing is a software testing method in which the software system is tested against the customer’s requirements to verify its readiness for delivery. The purpose of acceptance testing is to ensure that the software meets the customer’s expectations and is ready for production use. It is typically performed by the customer or end-users in a real-world environment and is the final step before software release.

Test Data in Acceptance Testing

In acceptance testing, the most important test data requirement is alignment with the customer’s needs and expectations. Acceptance testing ensures that the system meets the customer’s requirements and expectations. Therefore, the test data used for acceptance testing must be designed to test the system with relevant data and scenarios aligned with the customer’s needs and use cases.

Compared to other testing methods, the focus of acceptance testing is on the customer’s needs and expectations. The test data should incorporate scenarios and usage patterns relevant to the customer’s use cases and test the system in realistic usage scenarios.

Test Data Requirements for Acceptance Testing

- Customer Alignment: The test data used for acceptance testing must be designed to test the system with data and scenarios relevant to the customer’s needs and use cases.

- Realistic Scenarios: The test data used for acceptance testing must cover a range of realistic usage situations, so the system is exercised the way the customer will actually use it.

- Business Logic: The test data used for acceptance testing must test the system’s business logic, ensuring it meets the customer’s requirements.

- User Interface: The test data used for acceptance testing must test the user interface of the system, ensuring it is user-friendly and meets the customer’s expectations.

The most important test data requirement for acceptance testing is alignment with the customer’s needs and expectations. Additionally, the test data should include realistic scenarios, test the business logic and user interface, and reflect the customer’s real-world context.
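Acceptance test data is often easiest to agree with the customer when it is written as a table of named scenarios with expected outcomes. The sketch below assumes a made-up business rule (members receive 10% off orders of 100.00 or more); the rule, the `checkout_total` function, and the scenario values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    is_member: bool
    subtotal_cents: int
    expected_total_cents: int


def checkout_total(is_member: bool, subtotal_cents: int) -> int:
    """Hypothetical rule: members get 10% off orders of 100.00 or more."""
    if is_member and subtotal_cents >= 10_000:
        return subtotal_cents - subtotal_cents // 10
    return subtotal_cents


# Scenario table written in the customer's terms, including the threshold.
SCENARIOS = [
    Scenario("member at threshold", True, 10_000, 9_000),
    Scenario("member just below threshold", True, 9_999, 9_999),
    Scenario("non-member large order", False, 25_000, 25_000),
    Scenario("member large order", True, 25_000, 22_500),
]


def failed_scenarios(scenarios: list) -> list:
    """Return the names of scenarios whose actual total differs from expected."""
    return [
        s.name
        for s in scenarios
        if checkout_total(s.is_member, s.subtotal_cents) != s.expected_total_cents
    ]
```

Because each row carries a readable name, a failure report can be reviewed by business stakeholders without reading the code.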

Security Testing

What is Security Testing?

Security testing is a software testing method to identify and mitigate potential security vulnerabilities and risks. It ensures that the software system can withstand attacks and unauthorized access, protecting the confidentiality, integrity, and availability of the data. Security testing typically involves testing the software for common security flaws and vulnerabilities. It is critical for developing secure software and protecting sensitive information.

Test Data in Security Testing

In security testing, the most important test data requirement is to use realistic and representative data that reflects the types of data that the system is expected to handle and protect. Security testing evaluates the system’s ability to protect data and prevent unauthorized access, so it is crucial to use test data reflecting actual usage patterns and data types.

Compared to other testing methods, the focus of security testing is on evaluating the system’s ability to protect data and prevent unauthorized access. Therefore, the test data must reflect this objective.

Test Data Requirements for Security Testing

- Realistic Data: The test data used for security testing must include realistic data that reflects the types of data that the system is expected to handle and protect.

- Sensitive Data: The test data should include scenarios that simulate handling sensitive data, such as personally identifiable information (PII) and financial data.

- Malicious Data: The test data should include data that simulates malicious attacks, including intentionally corrupted or modified data to test the system’s ability to detect and prevent such attacks.

- Data Diversity: The test data should cover the different data types and formats that the system may encounter in real-world usage.

The most important test data requirement for security testing is to use realistic and representative data reflecting the system’s expected data types. Additionally, the test data should include sensitive data, malicious data, and data diversity to evaluate the system’s security capabilities.
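The following sketch shows how malicious and sensitive test data might be organized. The username rule, the `is_valid_username` and `mask_card` helpers, and the sample records are assumptions made for illustration; the card number is a widely used test value rather than a real account.

```python
import re

# Assumed rule: usernames are 3 to 20 letters, digits, or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")


def is_valid_username(value: str) -> bool:
    """Hypothetical validator under test."""
    return USERNAME_PATTERN.fullmatch(value) is not None


# Malicious data: inputs shaped like common attack patterns.
MALICIOUS_INPUTS = [
    "' OR '1'='1",                # SQL injection
    "<script>alert(1)</script>",  # cross-site scripting
    "../../etc/passwd",           # path traversal
    "admin\x00",                  # embedded null byte
    "A" * 10_000,                 # oversized input
]

# Sensitive data: synthetic records that look like PII and financial data.
SYNTHETIC_PII = [
    {"name": "Test User", "card_number": "4111111111111111"},
]


def accepted_malicious_inputs(inputs: list) -> list:
    """Return any malicious inputs that the validator wrongly accepts."""
    return [value for value in inputs if is_valid_username(value)]


def mask_card(number: str) -> str:
    """Mask all but the last four digits of a card number."""
    return "*" * (len(number) - 4) + number[-4:]
```

An empty result from `accepted_malicious_inputs(MALICIOUS_INPUTS)` indicates that the validator rejected every listed input; it says nothing about attack patterns that are not in the list.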

Performance Testing

What is Performance Testing?

Performance testing evaluates the responsiveness, stability, scalability, and other performance characteristics of the software system under various load conditions. The purpose is to identify and resolve performance bottlenecks before the software is released. It involves simulating different levels of load to determine the system’s capacity and ensure it can handle the expected user traffic.

Test Data in Performance Testing

In performance testing, the most important test data requirement is representative data that simulates real-world usage scenarios, including the volume and variety of data used by the system under test. Performance testing aims to evaluate the system’s behavior under different loads and stress, so it is crucial to use test data reflecting the expected workload and usage patterns.

Compared to other testing methods, the focus of performance testing is on measuring the system’s performance under different loads and stress. Therefore, the test data must reflect this objective.

Test Data Requirements for Performance Testing

- Realistic Volume and Variety of Data: The test data used for performance testing must include a realistic volume and variety of data, reflecting the actual usage patterns of the system.

- Load Variation: The test data should include scenarios that simulate different usage loads to test the system’s performance under different workloads.

- Stress Testing: The test data should include scenarios that simulate stress on the system to evaluate how it handles unexpected or extreme usage patterns.

- Data Diversity: The test data should cover the different data types and formats that the system may encounter in real-world usage.

The most important test data requirement for performance testing is to use representative data that simulates real-world usage scenarios, including the volume and variety of data used by the system under test. Additionally, the test data should incorporate different usage loads, stress scenarios, and data diversity to evaluate the system’s performance.
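Load variation and data variety can be expressed as data as well. In the sketch below, the load profile sizes, the request mix, and the payload size range are all assumed values for illustration; real figures would come from production traffic analysis.

```python
import random
from typing import Iterator

# Assumed number of requests generated per run for each load level.
LOAD_PROFILES = {"baseline": 100, "peak": 1_000, "stress": 5_000}

# Assumed share of each request type in real traffic.
REQUEST_MIX = {"search": 0.6, "view_item": 0.3, "checkout": 0.1}


def generate_requests(profile: str, seed: int = 7) -> Iterator[dict]:
    """Yield synthetic requests for the given load profile."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    kinds = list(REQUEST_MIX)
    weights = list(REQUEST_MIX.values())
    for request_id in range(LOAD_PROFILES[profile]):
        yield {
            "request_id": request_id,
            "kind": rng.choices(kinds, weights=weights)[0],
            "payload_bytes": rng.randint(200, 20_000),
        }
```

Using a generator avoids holding the whole data set in memory, which matters once the stress profile grows well beyond these illustrative sizes.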

Conclusion

Test data plays a crucial role in software testing, ensuring effective and efficient testing. The requirements for test data vary depending on the testing method used. Understanding the distinct characteristics of test data in each method is essential to achieve optimal testing results. By selecting the right test data that meets the requirements of specific testing methods, software developers and testers can enhance the quality and reliability of their software.

Other TDM Reading

Enjoy what you read? Here are a few more TDM articles that you might find interesting.

Enov8 Blog: A DevOps Approach to Test Data Management

Enov8 Blog: Types of Test Data you should use for your Software Tests?

Enov8 Blog: Why TDM is so Important!
